AI models eat money fast. You run prompts, and your server bills skyrocket. Do you know where your budget actually goes when you generate a single AI token?

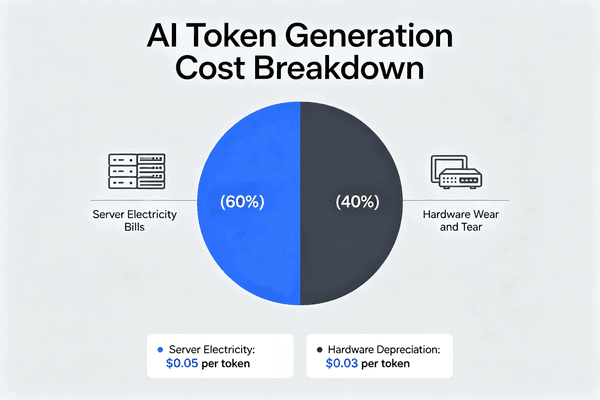

Token generation cost[1] is the total money spent to produce AI text or data. It mainly includes server electricity bills[2] and the daily loss of value (depreciation) of expensive hardware like GPUs. Calculating this cost per 1000 tokens helps businesses track their actual AI operating expenses[3] accurately.

Understanding this hidden cost is not just a math trick. It is the secret to keeping your AI projects alive. Let us break down the exact parts that drain your wallet and see how to fix them before high costs force your business to shut down.

How Do Electricity and Hardware Depreciation Define Token Costs?

High AI bills frustrate many procurement teams. You buy expensive chips, but the daily costs still shock you. How exactly do power and hardware aging create these token expenses?

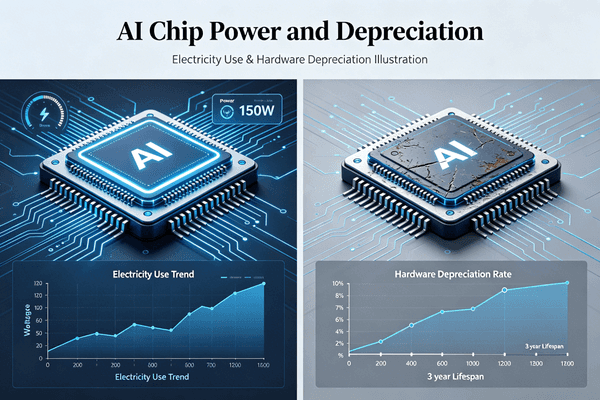

The true cost per 1000 tokens depends on two main factors. First, the electricity your servers use during data processing. Second, hardware depreciation[4], which means the lost value of your chips over time. Together, these form the baseline cost of running any AI model.

The Hidden Math Behind 1000 Tokens

I remember a meeting with a hardware engineer last year. His company made a popular AI tool, and he was shocked by his data center bills. We sat down and looked at the real numbers. We found that buying the chips is only the first step. The real pain comes later. When you run an AI model, the chips work very hard and draw a huge amount of power. This power turns into heat, and then you need even more power to cool the room. This electricity bill grows every single minute.

At Nexcir, we see this problem all the time. Our clients buy high-end ICs and modules, but they forget that these chips lose value every day. This loss of value is called hardware depreciation[4]. To find your real token cost, first divide the cost of the chip by its useful life to get its daily depreciation. Then, add the daily power cost, including cooling. Finally, divide that daily total by the number of tokens the hardware produces per day and multiply by 1000. This gives you the real cost per 1000 tokens. Let us look at a simple breakdown.

| Cost Type | What It Means | Impact on Token Cost |
|---|---|---|
| Electricity | Power used by chips and cooling systems | High and continuous |
| Depreciation | Loss of hardware value over time | Fixed but heavy |
| Maintenance | Labor and part replacements | Low but necessary |
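The calculation described above can be sketched in a few lines of Python. This is a minimal sketch, not a Nexcir tool. It makes these assumptions:

* Depreciation is straight-line.
* Power draw is constant around the clock.
* Cooling is folded in through a PUE (power usage effectiveness) multiplier.

The names `ServerProfile` and `cost_per_1000_tokens`, the `utilization` parameter, and every number in the usage example are hypothetical placeholders, not real chip specs.

```python
from dataclasses import dataclass


@dataclass
class ServerProfile:
    hardware_cost_usd: float        # purchase price of the inference hardware
    useful_life_days: float         # straight-line depreciation period
    power_draw_kw: float            # average draw of the chips while serving
    pue: float                      # power usage effectiveness: facility power / IT power (adds cooling)
    electricity_usd_per_kwh: float  # local electricity tariff
    maintenance_usd_per_day: float  # labor and spare parts, averaged per day
    tokens_per_second: float        # sustained generation throughput at full load
    utilization: float = 1.0        # fraction of the day spent actually generating tokens (assumption)


def cost_per_1000_tokens(p: ServerProfile) -> dict:
    """Split one server's daily cost into depreciation, electricity and maintenance,
    then spread it over the tokens that server produces in a day."""
    depreciation_per_day = p.hardware_cost_usd / p.useful_life_days
    # Cooling is included via PUE: facility energy = IT energy * PUE.
    # Simplification: power draw is treated as constant all day, even while idle.
    electricity_per_day = p.power_draw_kw * p.pue * 24 * p.electricity_usd_per_kwh
    tokens_per_day = p.tokens_per_second * 86_400 * p.utilization
    per_1000 = 1000 / tokens_per_day
    parts = {
        "depreciation": depreciation_per_day * per_1000,
        "electricity": electricity_per_day * per_1000,
        "maintenance": p.maintenance_usd_per_day * per_1000,
    }
    parts["total"] = sum(parts.values())
    return parts


if __name__ == "__main__":
    # Hypothetical placeholder inputs for illustration only; substitute your own figures.
    server = ServerProfile(
        hardware_cost_usd=30_000, useful_life_days=1_000, power_draw_kw=1.0, pue=1.5,
        electricity_usd_per_kwh=0.10, maintenance_usd_per_day=2.0,
        tokens_per_second=1_000, utilization=0.5,
    )
    for item, usd in cost_per_1000_tokens(server).items():
        print(f"{item:>12}: ${usd:.6f} per 1000 tokens")
```

Idle hours still depreciate the hardware. That is why the `utilization` parameter raises the cost per 1000 tokens when the server is busy only part of the day.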

I always tell my OEM clients to look at both sides. If you buy a cheap chip, it might use too much power. If you buy a very efficient chip, the upfront cost is huge. You need to find the right balance to keep your token cost low. We guarantee 100% original components because fake parts fail faster. A fake chip that dies before the end of its planned useful life increases your replacement costs. It also ruins your depreciation math, because the same purchase price is spread over fewer days of work. You must buy authentic parts from reliable global channels. This keeps your token costs predictable and safe. My team draws on 20 years of experience to help you build a stable supply chain. We want your AI servers to run smoothly without breaking your budget.
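To see the balance between purchase price and power draw, the short sketch below compares three hypothetical chip options on cost per 1000 tokens. One of them is a counterfeit unit that fails early. The sketch assumes straight-line depreciation and ignores cooling, maintenance, and idle time. The function name `chip_cost_per_1000_tokens` and every input value are illustrative assumptions, not real product data.

```python
def chip_cost_per_1000_tokens(price_usd: float, life_days: float, power_kw: float,
                              usd_per_kwh: float, tokens_per_second: float) -> float:
    """Straight-line depreciation plus electricity, spread over one day of full-load tokens.
    Cooling, maintenance and idle time are left out to keep the comparison short."""
    daily_depreciation = price_usd / life_days
    daily_electricity = power_kw * 24 * usd_per_kwh
    daily_tokens = tokens_per_second * 86_400
    return (daily_depreciation + daily_electricity) / daily_tokens * 1000


# Hypothetical chip options; every number is a placeholder, not a real product spec.
options = {
    "cheap, power-hungry chip": chip_cost_per_1000_tokens(10_000, 1_000, 2.0, 0.10, 500),
    "expensive, efficient chip": chip_cost_per_1000_tokens(30_000, 1_000, 0.8, 0.10, 1_500),
    # Same cheap chip, but a counterfeit unit that fails after a quarter of its planned life.
    "counterfeit cheap chip": chip_cost_per_1000_tokens(10_000, 250, 2.0, 0.10, 500),
}

# Print the options from cheapest to most expensive per 1000 tokens.
for name, usd in sorted(options.items(), key=lambda kv: kv[1]):
    print(f"{name}: ${usd:.6f} per 1000 tokens")
```

With these placeholder inputs, the efficient chip comes out cheapest per token even though it costs three times as much up front. The counterfeit unit comes out most expensive, because its price is spread over only a quarter of the days.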

Why Does Inference Chip Efficiency Decide AI Profit or Loss?

Many AI software startups lose money. They get users, but server costs eat their revenue. Why does your choice of inference chip dictate if your business survives or dies?

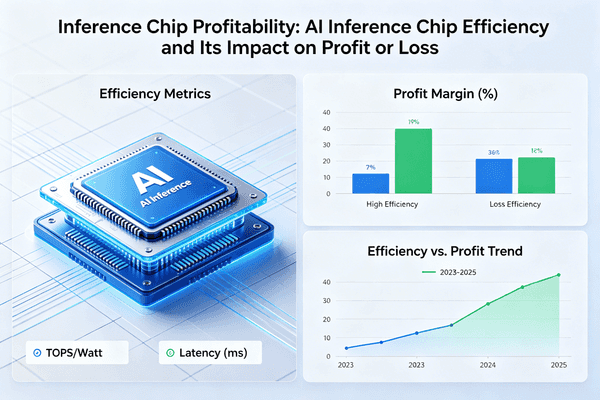

Inference chip efficiency[5] directly controls your operating margins. If a chip processes tokens slowly or uses too much power, your cost per user goes up. An efficient chip keeps the token generation cost[6] of each user below what that user pays you, turning a losing AI software company into a profitable one.

The Survival Equation for AI Companies

I often get calls from procurement managers at AI software companies. They want to find cheaper parts to save money, because their bosses are angry about high cloud costs. I tell them that the cheapest chip is not always the best choice. The real secret is chip efficiency[5]. Inference is the process where the AI actually answers user prompts, and it happens millions of times a day. If your chip is slow, it draws power for a longer time to make each token, so your cost per token goes up fast. If your users pay a fixed monthly fee, high token costs can exceed that fee, and every extra prompt deepens your loss. This is a huge pain point for the whole industry. At Nexcir, we supply original semiconductors to help solve this problem. We make sure our clients get the right chips, which must offer the best ratio of speed to power.

| Chip Efficiency Level | Token Cost per User | Business Result |
|---|---|---|
| Low Efficiency | Higher than subscription revenue | Company loses money |
| Medium Efficiency | Equal to subscription revenue | Company breaks even |
| High Efficiency | Lower than subscription revenue | Company makes a profit |
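The survival equation can be sketched as a per-user margin check. This minimal Python sketch assumes a flat monthly subscription and a known average token volume per user. It also converts power and speed into joules per token, since watts are joules per second. The function names, the $20 fee, the 2,000,000 tokens per month, and the three per-1000-token costs are hypothetical placeholders. They were chosen to reproduce the three rows of the table above.

```python
def energy_per_token_joules(power_watts: float, tokens_per_second: float) -> float:
    # Watts are joules per second, so dividing by tokens per second gives joules per token.
    # At the same power draw, a slower chip spends more joules on every token.
    return power_watts / tokens_per_second


def monthly_margin_per_user(subscription_usd: float,
                            tokens_per_user_per_month: float,
                            cost_per_1000_tokens_usd: float) -> float:
    # Token cost one user generates in a month, measured against that user's fixed fee.
    token_cost_usd = tokens_per_user_per_month / 1000 * cost_per_1000_tokens_usd
    return subscription_usd - token_cost_usd


def business_result(margin_usd: float, tolerance_usd: float = 0.01) -> str:
    # Map the margin onto the three outcomes in the table.
    if margin_usd < -tolerance_usd:
        return "loses money"
    if margin_usd > tolerance_usd:
        return "makes a profit"
    return "breaks even"


if __name__ == "__main__":
    # Hypothetical placeholder inputs, not measurements of any real chip or product.
    fee_usd = 20.0
    tokens_per_month = 2_000_000
    scenarios = [("low efficiency", 0.02), ("medium efficiency", 0.01), ("high efficiency", 0.005)]
    for label, cost in scenarios:
        margin = monthly_margin_per_user(fee_usd, tokens_per_month, cost)
        print(f"{label}: margin ${margin:.2f} per user -> company {business_result(margin)}")
```

The small `tolerance_usd` band keeps floating-point rounding from turning an exact break-even into a tiny profit or loss.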

You must treat inference chips like factory machines. A good machine makes products fast and cheap. A bad machine wastes materials and time. In the AI world, tokens are your final products. You need highly efficient chips to make tokens cheaply. This is the exact way an AI software company can survive today. We use our global supply network to help you pick the right parts. We provide stable pricing so market changes do not ruin your profit margins. When your hardware costs are stable, your software business can grow safely. We also offer technical support to optimize your choices. This lowers your procurement risks and makes your AI company highly competitive.

Will LPUs Take Over the GPU Inference Market by 2026?

GPUs rule the AI market today. But they are costly and hard to get. Could a new architecture like the LPU push GPUs out of the inference game soon?

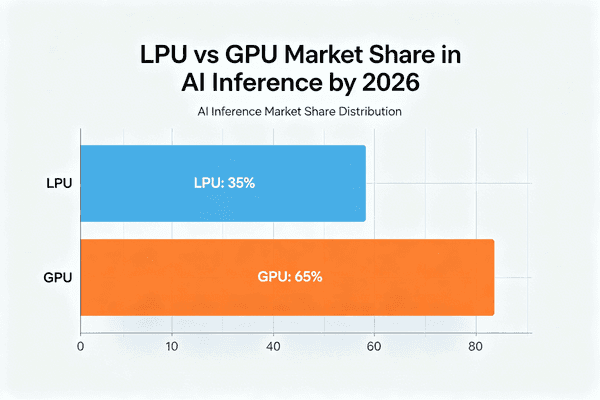

Yes, LPUs are expected to take a large share of the GPU inference market by 2026. Architectures like Groq's LPU are built purely for fast language-model processing. They offer major cost advantages, lower latency, and less power use per token compared to traditional GPUs, making them a strong fit for AI inference tasks[7].

The Rise of the Language Processing Unit[8]

I closely watch market trends for our global supply network. Over the last 20 years, I have seen many big tech shifts, and right now I see a massive change coming. GPUs are great for training big AI models, but they are not the best choice for inference tasks. GPUs are built to do many things at once, which makes them very complex and power-hungry. LPUs, like Groq's architecture[9], are completely different. They do one thing very well: they run language models fast. Because they are simpler, they use much less power. They also generate tokens much faster than GPUs, which drops the cost per token significantly. When I talk to hardware engineers today, they are all looking for alternatives to expensive GPUs. They want stable pricing and lower supply risks. By 2026, I strongly believe LPUs will become the new standard for AI inference.

| Feature | Traditional GPU | LPU (e.g., Groq) |
|---|---|---|
| Primary Use | Training and heavy graphics | Fast AI text inference |
| Power Use | Very high | Much lower |
| Token Cost | High | Very low |
| Supply Risk | High (market shortages) | Lower (new market entry) |

At Nexcir, we prepare our OEM clients for this market shift. We help them source the right components for these new LPU architectures. We also help them find safe alternatives for older parts that are hard to buy. If you want to stay ahead in 2026, you must look beyond traditional GPUs. You need to focus on cost-effective inference solutions[10] to protect your future profits. We use our trusted global logistics partners to deliver these new chips on time. This keeps your production schedule safe. It also keeps your token generation costs[6] low. Our goal is to grow alongside you. We want to help you build a smarter and more connected future with the right hardware.

Conclusion

Token generation costs[1] define AI success[11]. By understanding power, hardware depreciation[4], and new chips like LPUs, you can lower expenses and build a profitable, long-term AI business.

[1] Understanding token generation cost helps businesses track AI operating expenses accurately, ensuring financial sustainability.

[2] Exploring server electricity bills reveals how energy consumption affects AI operating expenses, crucial for budget management.

[3] Calculating AI operating expenses is essential for businesses to manage budgets and ensure profitability in AI projects.

[4] Learning about hardware depreciation helps in understanding the long-term costs of AI infrastructure, vital for financial planning.

[5] Chip efficiency directly influences AI profitability by affecting token generation costs, crucial for business success.

[6] Understanding token generation costs is crucial for building a profitable AI business and ensuring long-term success.

[7] Exploring AI inference tasks helps in understanding the operational aspects of AI models and their cost implications.

[8] Learning about LPUs provides insights into emerging technologies that could revolutionize AI inference tasks.

[9] Understanding Groq architecture helps in exploring innovative solutions for efficient AI inference processing.

[10] Exploring cost-effective inference solutions helps in reducing AI operating expenses and improving profitability.

[11] Understanding the impact of token generation costs on AI success is vital for strategic planning and business growth.