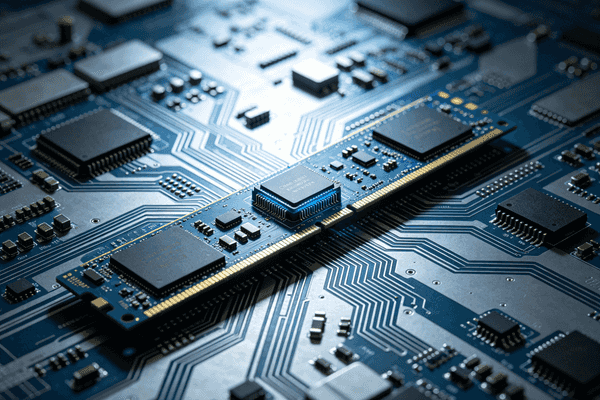

Your CPU often waits for data. This delay wastes time and power in your servers. [Computational memory](https://nexcir.com/finfet-vs-mosfet-why-are-modern-processors-switching-technologies/) [1] solves this by processing data right where it lives.

[Computational memory](https://nexcir.com/finfet-vs-mosfet-why-are-modern-processors-switching-technologies/) [1] is a technology that adds a small processing unit directly onto the memory module. It handles basic data tasks locally before sending information to the CPU. This reduces data movement, saves energy, and can make data-heavy workloads run much faster.

I remember visiting a massive data center [2] last year. The cooling fans were loud. I could barely hear myself think. Most of that energy went into simply moving data back and forth. The engineers looked tired. They were replacing burnt-out parts. You might wonder how we can stop this massive waste. The answer lies in changing how memory works. Let me explain the details below.

How Does Adding a Processor to Memory Work?

Moving raw data drains server speed. Your heavy workloads slow down. Putting a small processor on the memory stick cuts most of this heavy traffic.

Adding a processor to memory works by placing a tiny compute chip next to the DRAM. Instead of sending all raw data to the CPU, this local chip filters and processes the data first. It sends only the final results to the CPU, which saves a large amount of bus bandwidth.

I will break down this process. In my 20 years in the electronic components industry, I have seen many ways to speed up servers. I have sourced thousands of parts. But this method is very different.

The Old Way vs The New Way

The CPU asks for data. The memory sends the data over a long bus. The CPU does the math. The CPU sends the result back to the memory. This takes a lot of time. With computational memory, the memory does the math itself. The memory has its own brain. It does not need the CPU for simple tasks.
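The two data paths above can be sketched as a toy model. This is only an illustration, not how any real module is programmed: the `ToyMemoryModule` class, its method names, and the 8-byte value size are all assumptions made up for this example. It simply counts how many bytes would cross the bus in each case.

```python
from dataclasses import dataclass, field


@dataclass
class ToyMemoryModule:
    """Hypothetical memory module that counts bytes crossing the bus."""

    values: list[int]
    bytes_per_value: int = 8  # assumed size of one stored value
    bus_bytes: int = field(default=0, init=False)

    def read_all(self) -> list[int]:
        """Old way: ship every stored value to the CPU."""
        self.bus_bytes += len(self.values) * self.bytes_per_value
        return list(self.values)

    def local_sum_above(self, threshold: int) -> int:
        """New way: filter and add inside the module, ship one result."""
        result = sum(v for v in self.values if v > threshold)
        self.bus_bytes += self.bytes_per_value
        return result


def cpu_sum_above(module: ToyMemoryModule, threshold: int) -> int:
    """The CPU pulls all data over the bus and does the math itself."""
    return sum(v for v in module.read_all() if v > threshold)


data = list(range(1_000))

old = ToyMemoryModule(data)
old_result = cpu_sum_above(old, 900)

new = ToyMemoryModule(data)
new_result = new.local_sum_above(900)

print("same answer:", old_result == new_result)
print("bytes over the bus (old, new):", old.bus_bytes, new.bus_bytes)
```

Both paths return the same sum. The difference is that the old path moves every value across the bus, while the new path moves a single result.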

Why Local Processing Matters

Think about a massive database search. You only need one specific record from millions of records. In the old way, you move the whole database to the CPU. The CPU checks every record. In the new way, the memory searches its own contents. It only sends the one matching record to the CPU. This is huge for the hardware engineers who buy parts from Nexcir. It changes how we pick ICs for them. We need reliable and 100% original parts for this to work. We avoid counterfeit chips to ensure safety.

Lowering Supply Chain Risks [3]

When you buy these smart memory modules, you need a trusted supplier. Fake parts will fail. They will ruin your server. At Nexcir, we only use authorized channels. We protect your production schedule.

| Feature | Traditional Memory | Computational Memory |
|---|---|---|
| Data Movement | High | Low |
| CPU Load | Heavy | Light |
| Power Use | Very High | Much Lower |
| Best Use Case | General tasks | Big data tasks |

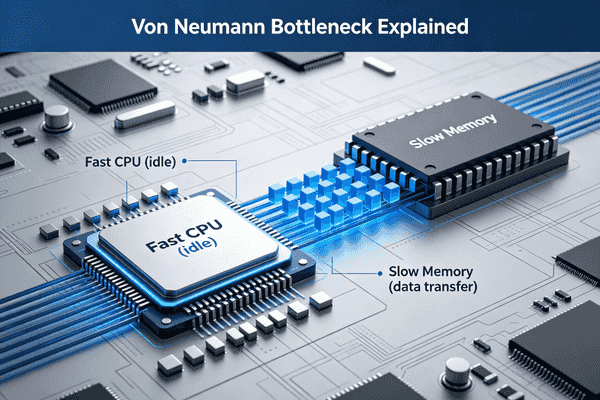

Why Must We Eliminate the Von Neumann Bottleneck?

Your processors are fast. Your memory is slow. This gap creates a huge [data traffic jam](https://medium.com/@mohamedayman23/traffic-jams-inside-your-cpu-the-truth-about-bus-stalling-f38563d06d47) [4]. We must break the von Neumann bottleneck to keep systems running smoothly.

We must eliminate the von Neumann bottleneck because it limits system performance. The CPU spends too much idle time waiting for data from memory. Removing this bottleneck cuts down unnecessary data movement, lowers power use, and unlocks the true speed of modern processors.

I often talk to OEM procurement managers. They always want to buy faster CPUs. They think a new CPU will fix everything. I tell them the CPU is not the real problem. The road between the CPU and memory is the actual problem.

Understanding the Data Traffic Jam

The von Neumann architecture uses one shared pipe for data and instructions. CPUs got very fast over the years. Memory capacity grew a lot. Memory bandwidth and latency improved far more slowly. This means the fast CPU often sits and waits. It does nothing useful. It wastes expensive hardware. You pay for speed you cannot use.
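To put a rough number on that waiting, here is a small roofline-style calculation. The function name and all three input values are hypothetical and chosen only for illustration; they do not describe any real CPU or memory part.

```python
def cpu_utilization(peak_ops_per_s: float,
                    mem_bytes_per_s: float,
                    ops_per_byte: float) -> float:
    """Fraction of peak compute a memory-fed workload can reach.

    Simple roofline-style bound: the memory link can feed at most
    mem_bytes_per_s * ops_per_byte operations per second.
    """
    fed_ops_per_s = mem_bytes_per_s * ops_per_byte
    return min(1.0, fed_ops_per_s / peak_ops_per_s)


# Illustrative values only, not measurements of any real part.
PEAK_OPS = 1e12      # hypothetical CPU: 1 trillion operations per second
MEM_BW = 100e9       # hypothetical memory link: 100 GB per second
OPS_PER_BYTE = 0.5   # hypothetical workload: 1 operation per 2 bytes loaded

busy = cpu_utilization(PEAK_OPS, MEM_BW, OPS_PER_BYTE)
print(f"CPU busy: {busy:.0%}, waiting: {1 - busy:.0%}")
```

Under these assumed numbers, the memory link can feed only a small fraction of what the CPU could compute. A faster CPU would not help here. Only more bandwidth or less data movement would.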

The Cost of Moving Data [5]

Moving data between memory and the CPU often uses more energy than doing the actual math. For battery-powered IoT devices [6], this is bad. For massive server farms, this power waste is a major pain point. At Nexcir, we supply many PMICs and MCUs to these farms. We see customers struggling to manage heat and power costs. Reducing data movement addresses this issue directly.
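A back-of-the-envelope model shows why data movement dominates the energy bill. The two per-event energies below are assumed placeholders, not measurements of any real chip. They only reflect the general idea that an off-chip memory fetch costs far more than one arithmetic operation. The model also ignores the energy the module spends reading its own DRAM array, so treat it as a sketch and plug in measured figures for a real design.

```python
def total_energy_pj(ops: int, values_moved: int,
                    pj_per_op: float, pj_per_move: float) -> float:
    """Energy in picojoules: compute cost plus bus-transfer cost."""
    return ops * pj_per_op + values_moved * pj_per_move


# ASSUMED placeholder energies, not measurements of any real chip.
PJ_PER_OP = 1.0      # one arithmetic operation
PJ_PER_MOVE = 500.0  # one value fetched from off-chip DRAM to the CPU

N = 1_000_000  # number of values to add up

# Traditional: every value crosses the bus, then the CPU adds it.
traditional_pj = total_energy_pj(N, N, PJ_PER_OP, PJ_PER_MOVE)

# Near-memory: the module adds locally and ships one result.
near_memory_pj = total_energy_pj(N, 1, PJ_PER_OP, PJ_PER_MOVE)

print(f"traditional: {traditional_pj / 1e6:.1f} uJ")
print(f"near-memory: {near_memory_pj / 1e6:.1f} uJ")
```

With these placeholder values, almost all of the traditional energy goes to moving data, not to the additions themselves.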

Stable Pricing for New Tech [7]

Fixing this bottleneck requires new types of chips. Buyers worry about high prices. They worry about market changes. We use our global network to find stable prices. We keep your costs low.

| Problem Area | Impact of Bottleneck | Benefit of Fixing It |
|---|---|---|
| Speed | CPU waits for data | CPU works at full speed |
| Energy | High power to move data | Lower power bills |
| Heat | Motherboard gets hot | Easier cooling |
| Cost | Wasted CPU cycles | Better return on hardware |

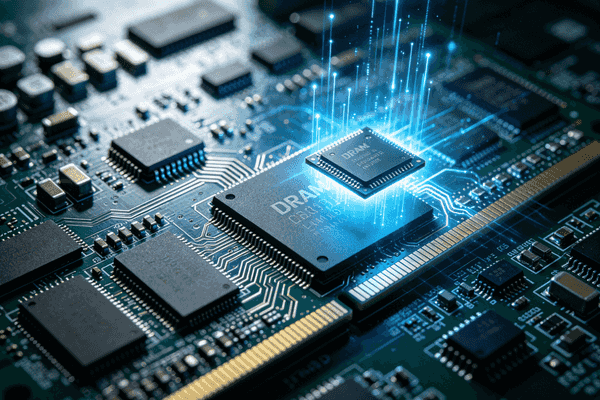

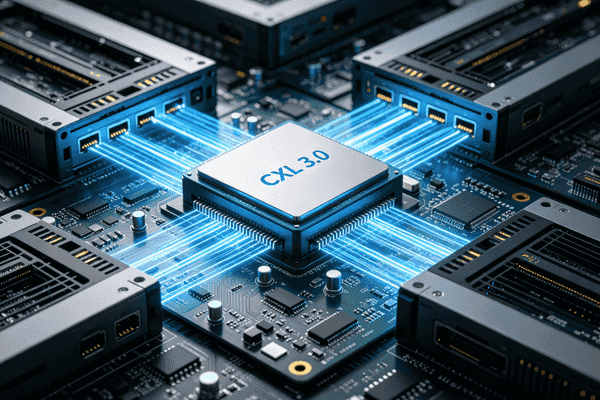

How Will [CXL 3.0](https://investor.marvell.com/news-events/press-releases/detail/1017/marvell-launches-next-generation-cxl-switch-enabling-memory-pooling-to-break-through-the-ai-memory-wall) [8] Change Server Motherboard Design by 2026?

Current servers trap memory inside single machines. You waste money on unused RAM. By 2026, [CXL 3.0](https://investor.marvell.com/news-events/press-releases/detail/1017/marvell-launches-next-generation-cxl-switch-enabling-memory-pooling-to-break-through-the-ai-memory-wall) [8] will pool memory together to stop this expensive waste.

By 2026, [CXL 3.0](https://investor.marvell.com/news-events/press-releases/detail/1017/marvell-launches-next-generation-cxl-switch-enabling-memory-pooling-to-break-through-the-ai-memory-wall) [8] is expected to change server motherboard design by enabling [memory pooling](https://nexcir.com/finfet-vs-mosfet-why-are-modern-processors-switching-technologies/) [9] at a larger scale. Servers may no longer need a full set of local RAM slots for every CPU. Multiple servers can share a large central pool of memory. This design can save physical space, cut hardware costs, and make upgrades much easier.

I recently helped a client plan their [future server builds](https://www.trendforce.com/news/2024/01/09/insights-apple-watch-faces-allegations-of-blood-oxygen-monitoring-patent-infringement-resolution-or-redesign-incurs-substantial-costs/) [10]. They build systems for industrial AI. They were worried about the rising cost of DDR5 memory. They needed a better plan. I told them to look ahead to [CXL 3.0](https://investor.marvell.com/news-events/press-releases/detail/1017/marvell-launches-next-generation-cxl-switch-enabling-memory-pooling-to-break-through-the-ai-memory-wall) [8].

The Shift to Memory Pooling [11]

Servers trap memory today. If Server A needs more memory, you buy a stick. You plug it into Server A. If Server B has extra memory, Server A cannot use it. [CXL 3.0](https://investor.marvell.com/news-events/press-releases/detail/1017/marvell-launches-next-generation-cxl-switch-enabling-memory-pooling-to-break-through-the-ai-memory-wall) [8] changes this. It lets servers attach to a shared pool of memory over a CXL fabric. Any server can borrow memory when it needs it. Far less memory sits idle.
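The Server A and Server B situation can be sketched with a toy allocator. This is not the CXL protocol and not a real API: the `MemoryPool` class, its methods, the server names, and the capacities are all hypothetical. In a real system, pooled memory is assigned through CXL switches and fabric management software.

```python
class MemoryPool:
    """Hypothetical shared memory pool; all sizes are in GB."""

    def __init__(self, capacity_gb: int) -> None:
        self.capacity_gb = capacity_gb
        self.leases: dict[str, int] = {}

    @property
    def free_gb(self) -> int:
        """Capacity not currently leased to any server."""
        return self.capacity_gb - sum(self.leases.values())

    def borrow(self, server: str, gb: int) -> bool:
        """Lease gb to a server if the pool has room."""
        if gb > self.free_gb:
            return False
        self.leases[server] = self.leases.get(server, 0) + gb
        return True

    def release(self, server: str) -> None:
        """Return everything a server borrowed to the pool."""
        self.leases.pop(server, None)


# Assumed memory demand per server, in GB.
demand = {"server_a": 96, "server_b": 16}

# Today: each server owns 64 GB that nobody else can use.
LOCAL_GB = 64
unmet_local = {s: max(0, d - LOCAL_GB) for s, d in demand.items()}
idle_local = {s: max(0, LOCAL_GB - d) for s, d in demand.items()}

# Pooled: the same 128 GB total, shared by both servers.
pool = MemoryPool(128)
granted = {s: pool.borrow(s, d) for s, d in demand.items()}

print("local, unmet demand:", unmet_local)
print("local, idle memory:", idle_local)
print("pooled, all requests granted:", all(granted.values()))
print("pooled, free memory left:", pool.free_gb)
```

With fixed local memory, Server A falls short while Server B leaves capacity unused. With the same total capacity in a pool, both requests fit.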

Impact on Motherboard Space [12]

Motherboards will look very different soon. Hardware engineers can remove many local DIMM slots. This frees up a lot of physical space. They can add more cooling fans. They can add extra networking chips. As a global distributor, Nexcir is already preparing for this major shift. We are sourcing the new high-speed connectors. We are finding the switches needed for CXL fabrics.

Reliable Delivery for 2026 [13]

When 2026 arrives, everyone will want these new parts. Supply lines will get tight. You will need a reliable partner. We have strong logistics. We will deliver these new CXL parts on time.

| Motherboard Element | Current Design (2024) | Future Design (2026) |
|---|---|---|
| Memory Slots | Many slots near CPU | Few or zero local slots |
| Board Size | Large to fit RAM | Smaller and cleaner |
| Connectors | Standard PCIe | High-speed CXL ports |
| Component Focus | Local memory chips | Fabric switches and cables |

Conclusion [14]

[Computational memory](https://nexcir.com/finfet-vs-mosfet-why-are-modern-processors-switching-technologies/) [1] and [CXL 3.0](https://investor.marvell.com/news-events/press-releases/detail/1017/marvell-launches-next-generation-cxl-switch-enabling-memory-pooling-to-break-through-the-ai-memory-wall) [8] target the same data bottlenecks. By processing data locally and sharing memory pools, future servers can run faster, save power, and lower your hardware costs.

1. Explore how computational memory can revolutionize data processing by reducing data movement and saving energy, enhancing system performance.
2. Learn about the energy challenges faced by data centers and how computational memory can help mitigate these issues.
3. Find out how to mitigate supply chain risks when acquiring smart memory modules to ensure server reliability.
4. Find out how data traffic jams slow down CPUs and how computational memory can alleviate this issue.
5. Understand the financial impact of data movement in server farms and how computational memory can reduce costs.
6. Explore the impact of data movement on battery life in IoT devices and how computational memory can help.
7. Explore strategies for maintaining stable pricing for new tech components amidst market fluctuations.
8. Learn about the upcoming CXL 3.0 technology and its potential to transform server designs by 2026.
9. Discover the concept of memory pooling and how it can optimize resource usage in servers.
10. Gain insights into planning server builds with emerging technologies like CXL 3.0 for better efficiency.
11. Understand the transition to memory pooling and its impact on server architecture and resource management.
12. Explore how memory pooling will change motherboard design, freeing up space for other components.
13. Learn about the importance of reliable delivery for new tech components as demand increases in 2026.
14. Learn how these technologies will enhance server performance, save power, and reduce hardware costs.